To add a new Oracle Authenticator to your Oracle account, visit https://signon.oracle.com/. The site lets you add and remove two-factor authentication.

HP-UX – /var is filling up and found /var/stm/logs/os

The /var filesystem was filling up, and I found several files under /var/stm/logs/os.

You need to keep the following files:

memlog

logXXXX.raw.cur

ccbootlog

I deleted several logXXXX.raw files to recover disk space
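A minimal sketch for reviewing the removal candidates before deleting anything. It assumes the rotated logs match the pattern log*.raw (inferred from the logXXXX.raw naming above); the function name is made up for illustration.

```shell
#!/bin/sh
# List rotated raw logs that are candidates for removal.
# -name 'log*.raw' only matches names that END in ".raw", so the active
# logXXXX.raw.cur file, memlog and ccbootlog are never listed.
# $1 = directory to search (for example /var/stm/logs/os).
list_old_raw_logs() {
    find "$1" -type f -name 'log*.raw' -print
}

# Example (prints the candidates only, removes nothing):
#   list_old_raw_logs /var/stm/logs/os
```

Printing the list first and deleting by hand keeps the three files that must stay out of reach of a mistyped wildcard.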

RHEL6 – mkfs.ext4: Size of device too big to be expressed in 32 bits

root@linux:~ # mkfs.ext4 -T largefile /dev/filelibraryvg/filelibrarylv

mke2fs 1.41.12 (17-May-2010)

mkfs.ext4: Size of device /dev/filelibraryvg/filelibrarylv too big to be expressed in 32 bits

using a blocksize of 4096.

EXT4 has a maximum supported size of 16TB here; see "Why am I unable to format a volume bigger than 16TB using the EXT4 filesystem?"

A volume larger than that needs to be created with another filesystem type.
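The 16TB figure follows from the error message itself: with 32-bit block numbers and a block size of 4096 bytes, the largest addressable size is 2^32 × 4096 bytes. A small sketch of that arithmetic (the function names are hypothetical, and the strict comparison is my reading of "too big to be expressed in 32 bits"):

```shell
#!/usr/bin/env bash
# Size in bytes covered by 2^32 blocks of the given block size.
# $1 = block size in bytes (4096 in the mke2fs output above).
max_fs_bytes() {
    local block_size=$1
    echo $(( (1 << 32) * block_size ))
}

# Succeeds when a device of $1 bytes stays strictly below that limit for
# block size $2; a block count of 2^32 itself no longer fits in 32 bits.
fits_in_32bit_blocks() {
    local device_bytes=$1 block_size=$2
    (( device_bytes < $(max_fs_bytes "$block_size") ))
}

limit=$(max_fs_bytes 4096)
echo "limit: $limit bytes ($(( limit >> 40 )) TiB)"

# Example against a real device (blockdev is part of util-linux):
#   fits_in_32bit_blocks "$(blockdev --getsize64 /dev/filelibraryvg/filelibrarylv)" 4096
```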

Veeam – Resource deadlock avoided

Logs located under /var/log/veeam/Backup/

root@linux:/var/log/veeam/Backup/VM_GRP_UX_AGENT_DAILY_LINUXMW006_0200 – linux/Session_20200515_075014_{53ca5d5c-5fb9-4455-b21e-8580c1d9fc0d} # cat Job.log

[15.05.2020 07:50:26] vmb | [SessionLog][processing] Creating volume snapshot.

[15.05.2020 07:50:38] lpbcore| WARN|Method invocation was not finalized. Method id [1]. Class: [lpbcorelib::interaction::ISnapshotOperation]

[15.05.2020 07:50:38] lpbcore| ERR |Resource deadlock avoided

[15.05.2020 07:50:38] lpbcore| >> |Failed to execute IOCTL_SNAPSHOT_CREATE.

[15.05.2020 07:50:38] lpbcore| >> |--tr:Cannot create snapshot

[15.05.2020 07:50:38] lpbcore| >> |--tr:Failed to create machine snapshot

[15.05.2020 07:50:38] lpbcore| >> |--tr:Failed to finish snapshot creation process.

[15.05.2020 07:50:38] lpbcore| >> |--tr:Failed to execute method [1] for class [lpbcorelib::interaction::proxystub::CSnapshotOperationStub].

[15.05.2020 07:50:38] lpbcore| >> |--tr:Failed to invoke method [1] in class [lpbcorelib::interaction::ISnapshotOperation].

[15.05.2020 07:50:38] lpbcore| >> |An exception was thrown from thread [140159715555072].

[15.05.2020 07:50:38] lpbcore| ERR |Failed to execute thaw script with status: fail.

[15.05.2020 07:50:38] vmb | [SessionLog][error] Failed to create volume snapshot.

[15.05.2020 07:50:38] lpbcore| Taking snapshot. Failed.

[15.05.2020 07:50:38] | Thread finished. Role: ‘Session checker for Job: {98481a6f-28b7-4a82-befa-6b69a61824f3}.’

The forum thread below suggests checking the kernel version and the veeamsnap package:

https://forums.veeam.com/veeam-agent-for-linux-f41/failed-to-create-volume-snapshot-on-centos-t36414.html

root@linux:~ # lsmod | grep veeam

veeamsnap 147050 0

root@linux:~ # rpm -qa | grep veeamsnap

kmod-veeamsnap-3.0.2.1185-1.el7.x86_64

kmod-veeamsnap-3.0.2.1185-2.6.32_131.0.15.el6.x86_64

root@linux:~ # uname -r

3.10.0-1062.9.1.el7.x86_64
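The output above lists one kmod-veeamsnap package tagged el7 and one tagged el6, on an el7 kernel. A rough sketch that flags packages whose release tag differs from the running kernel's follows. The function names are hypothetical, and these notes do not establish whether such a mismatch is what causes the "Resource deadlock avoided" error.

```shell
#!/usr/bin/env bash
# Extract the distribution tag (e.g. "el7") from a kernel release or package name.
el_tag() {
    printf '%s\n' "$1" | grep -oE '\.el[0-9]+' | tail -n 1 | tr -d '.'
}

# Read package names from stdin and print those whose tag differs from the
# tag of the kernel release given as $1.
mismatched_packages() {
    local kernel_tag pkg
    kernel_tag=$(el_tag "$1")
    while IFS= read -r pkg; do
        [ -n "$pkg" ] || continue
        [ "$(el_tag "$pkg")" = "$kernel_tag" ] || printf '%s\n' "$pkg"
    done
}

# Example:
#   rpm -qa | grep veeamsnap | mismatched_packages "$(uname -r)"
```

With the values shown above, only the package ending in el6.x86_64 would be printed.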

Linux – umount: /filesystem: device is busy. No processes found using lsof and fuser

root@linux:~ # df -hP | grep SCR

/dev/mapper/vgSAPlocal-lv_sapmnt_SCR 15G 2.0G 12G 15% /sapmnt/SCR

scsscr:/export/sapmnt/SCR/exe 4.2G 3.5G 473M 89% /sapmnt/SCR/exe

scsscr:/export/sapmnt/SCR/global 4.2G 362M 3.6G 9% /sapmnt/SCR/global

scsscr:/export/sapmnt/SCR/profile 2.0G 3.0M 1.9G 1% /sapmnt/SCR/profile

I unmounted the filesystems under /sapmnt/SCR

root@linux:~ # umount /sapmnt/SCR/exe /sapmnt/SCR/global /sapmnt/SCR/profile

root@linux:~ #

But I was unable to unmount /sapmnt/SCR

root@linux:~ # umount /sapmnt/SCR

umount: /sapmnt/SCR: device is busy.

(In some cases useful info about processes that use

the device is found by lsof(8) or fuser(1))

Neither fuser nor lsof found any process using the filesystem.

I was able to unmount it after restarting the autofs service

root@linux:~ # service autofs restart

Stopping automount: [ OK ]

Starting automount: [ OK ]
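Since lsof and fuser only report processes, a mount that is held by the kernel (an autofs mount point, for instance) does not show up there. A sketch for listing everything still mounted at or below a directory, read from the mount table; the function name is hypothetical, and that autofs was the holder here is inferred from the restart fixing it.

```shell
#!/usr/bin/env bash
# Print "mountpoint fstype" for every mount at or below directory $1.
# $2 is the mount table to read; it defaults to /proc/mounts.
mounts_under() {
    local dir=${1%/} table=${2:-/proc/mounts}
    awk -v d="$dir" '$2 == d || index($2, d "/") == 1 { print $2, $3 }' "$table"
}

# Example:
#   mounts_under /sapmnt/SCR
# Any line with fstype "autofs" points at the automounter rather than a process.
```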

VMware Tools: Failed to install the pre-built module

I have never seen this error before.

I’ll try to research this error later

Before running VMware Tools for the first time, you need to configure it by

invoking the following command: “/usr/bin/vmware-config-tools.pl”. Do you want

this program to invoke the command for you now? [yes]

INPUT: [yes] default

Initializing…

Making sure services for VMware Tools are stopped.

Found a compatible pre-built module for vmci. Installing it…

Failed to install the vmci pre-built module.

The communication service is used in addition to the standard communication

between the guest and the host. The rest of the software provided by VMware

Tools is designed to work independently of this feature.

If you wish to have the VMCI feature, you can install the driver by running

vmware-config-tools.pl again after making sure that gcc, binutils, make and the

kernel sources for your running kernel are installed on your machine. These

packages are available on your distribution’s installation CD.

[ Press Enter key to continue ]

INPUT: [ Press Enter key to continue ] default

Found a compatible pre-built module for vsock. Installing it…

Failed to install the vsock pre-built module.

UXMON: mpatho – Only one FC Switch detected, no SAN FC Switch redundancy

After being told that the error was caused by a storage node reboot, I checked whether HPOM still showed the error

root@linux:~ # /var/opt/OV/bin/instrumentation/UXMONbroker -check mpmon

Thu May 14 10:34:20 2020 : INFO : UXMONmpmon is running now, pid=31809

Thu May 14 10:34:20 2020 : Minor: mpatho – Only one FC Switch detected, no SAN FC Switch redundancy

mv: `/dev/null' and `/dev/null' are the same file

Thu May 14 10:34:20 2020 : INFO : UXMONmpmon end, pid=31809

Checking multipath for mpatho

root@linux:~ # multipath -ll mpatho

mpatho (350002ac666af374b) dm-74 3PARdata,VV

size=150G features='1 queue_if_no_path' hwhandler='0' wp=rw

`-+- policy='round-robin 0' prio=1 status=active

|- 2:0:0:5 sday 67:32 active ready running

`- 4:0:0:5 sdax 67:16 active ready running

Rescanning the server

root@linux:~ # systool -av -c fc_host | grep "Class Device =" | awk -F'=' {'print $2'} | awk -F'"' {'print "echo \"- - -\" > /sys/class/scsi_host/"$2"/scan"'} | bash
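The same rescan can be written as a plain loop over sysfs, which avoids generating shell commands with awk. This is a sketch, not a drop-in replacement: the function name and the optional sysfs-root parameter are my own additions, the latter only so the loop can be tried against a scratch directory.

```shell
#!/usr/bin/env bash
# Rescan every SCSI host that is backed by a Fibre Channel HBA.
# $1 is the sysfs root; it defaults to /sys.
rescan_fc_hosts() {
    local sysfs=${1:-/sys} entry host
    for entry in "$sysfs"/class/fc_host/host*; do
        [ -e "$entry" ] || continue      # glob did not match: no FC HBAs
        host=${entry##*/}
        # "- - -" is the wildcard for channel, target and LUN.
        echo "- - -" > "$sysfs/class/scsi_host/$host/scan"
        echo "rescanned $host"
    done
}

# Example (as root):
#   rescan_fc_hosts
```

Only hosts listed under fc_host are touched, so local SCSI or SAS controllers are left alone, as with the systool pipeline.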

Checking multipath for mpatho

root@linux:~ # multipath -ll mpatho

mpatho (350002ac666af374b) dm-74 3PARdata,VV

size=150G features='1 queue_if_no_path' hwhandler='0' wp=rw

`-+- policy='round-robin 0' prio=1 status=active

|- 2:0:0:5 sday 67:32 active ready running

|- 4:0:0:5 sdax 67:16 active ready running

|- 1:0:0:5 sdaz 67:48 active ready running

`- 3:0:0:5 sdba 67:64 active ready running
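To compare the number of healthy paths before and after a rescan without reading the tree by eye, the path lines can simply be counted. A small sketch, with a hypothetical function name, that relies on the "active ready running" wording seen in the output above:

```shell
#!/usr/bin/env bash
# Count the path lines reported as "active ready running" on stdin.
count_ready_paths() {
    grep -c 'active ready running'
}

# Example:
#   multipath -ll mpatho | count_ready_paths
```

For the two listings above this gives 2 before the rescan and 4 after it.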

Checking whether HPOM still shows the error

root@linux:~ # /var/opt/OV/bin/instrumentation/UXMONbroker -check mpmon

Thu May 14 10:36:26 2020 : INFO : UXMONmpmon is running now, pid=37696

mv: `/dev/null' and `/dev/null' are the same file

Thu May 14 10:36:26 2020 : INFO : UXMONmpmon end, pid=37696

HPE Service Guard for Linux: Cannot halt while it is waiting to relocate

root@linux01:~ # cmhaltpkg -n linux01 -f infraMP0

Cannot halt infraMP0 while it is failing

root@linux01:~ # cmhaltpkg -n linux01 -f mdmMP0 dbMP0 transMP0

Cannot halt mdmMP0 while it is waiting to relocate

Cannot halt dbMP0 while it is waiting to relocate

Cannot halt transMP0 while it is waiting to relocate

I had to kill the processes owned by the mp0adm user.

After that, all the packages were relocated automatically to the other node.

Running Appcollect

Please generate the ADU report.

Download AppCollectv3.2.tar.gz from the FTP drop box, copy it to the /tmp directory, and execute the following commands:

# cd /tmp

# tar -Pzxvf AppCollectv3.2.tar.gz

# /hp/support/tools/AppCollect

root@linux:/tmp # tar -Pzxvf AppCollectv3.2.tar.gz

/hp/

/hp/support/

/hp/support/tools/

/hp/support/tools/AppCollect

/hp/support/tools/conrep.xml

/hp/support/tools/conrep

/hp/support/tools/sginfo

/hp/support/tools/iml.xml

/hp/support/tools/ilo.xml

/hp/support/tools/AppCollect_v3.2_README.txt

/hp/support/tools/uc_v2.0I_SP4.tar.gz

/hp/support/tools/supportutils-3.0-44.3.noarch.rpm

/hp/support/data/

root@linux:/tmp # /hp/support/tools/AppCollect

————————————————————

HP ConvergedSystem for SAP HANA

/hp/support/tools/AppCollect

Version: 3.2

Author: Bill Neumann – SAP HANA CoE

© Copyright 2013 Hewlett-Packard Development Company, L.P.

————————————————————

Please enter the sidadm user name: lp0adm

Phase 1: Checking supportutils installation.

Phase 2: Getting IML event log. This may take up to 5 minutes.

Phase 3: Getting ILO event log. This may take up to 5 minutes.

Phase 4: Getting BIOS power settings data.

Phase 5: Getting system survey data. This may take up to 10 minutes.

Phase 6: Getting SAP version information.

Phase 7: Getting supportconfig data. This may take up to 30 minutes.

Phase 8: Getting SAP trace files.

Phase 9: Wrapping up data collection. This may take a few minutes.

Done collecting data, please provide /hp/support/data/DL580.linux_202001151507.tgz to HP Support.

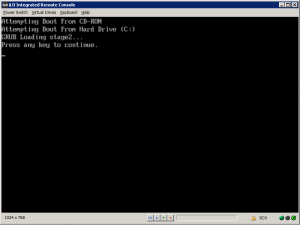

RHEL 5 booting stuck – GRUB Loading stage2… Press any key to continue

I have an HPE ProLiant DL380 G7 running Red Hat Enterprise Linux 5 that got stuck at boot on the message "GRUB Loading stage2… Press any key to continue".

I removed the section shown in red from /boot/grub/grub.conf:

root@rhel5:/boot/grub # cat grub.conf

# grub.conf generated by anaconda

#

# Note that you do not have to rerun grub after making changes to this file

# NOTICE: You have a /boot partition. This means that

# all kernel and initrd paths are relative to /boot/, eg.

# root (hd0,0)

# kernel /vmlinuz-version ro root=/dev/rootvg/rootlv

# initrd /initrd-version.img

#boot=/dev/cciss/c0d0

default=0

timeout=5

#splashimage=(hd0,0)/grub/splash.xpm.gz

#hiddenmenu

serial --unit=0 --speed=115200

terminal --timeout=10 console serial

title Red Hat Enterprise Linux Server (2.6.18-406.el5)

root (hd0,0)

kernel /vmlinuz-2.6.18-406.el5 ro root=/dev/rootvg/rootlv rhgb noquiet crashkernel=128@16M log_buf_len=3M elevator=noop nmi_watchdog=0

initrd /initrd-2.6.18-406.el5.img

title Red Hat Enterprise Linux Server (2.6.18-308.16.1.el5)

root (hd0,0)

kernel /vmlinuz-2.6.18-308.16.1.el5 ro root=/dev/rootvg/rootlv rhgb noquiet crashkernel=128@16M log_buf_len=3M elevator=noop nmi_watchdog=0

initrd /initrd-2.6.18-308.16.1.el5.img

title Red Hat Enterprise Linux Server (2.6.18-308.el5)

root (hd0,0)

kernel /vmlinuz-2.6.18-308.el5 ro root=/dev/rootvg/rootlv rhgb noquiet crashkernel=128M@16M log_buf_len=3M elevator=noop nmi_watchdog=0

initrd /initrd-2.6.18-308.el5.img
